47 seconds of attention, 20 AI agents: the math needs orchestration.

Engineers cap at 5 supervised agents. The rest of us cap at 47 seconds. Both ceilings lift by stealing one layer from devs.

It’s tempting to imagine 100 AI agents running in parallel, around the clock. That’s the idea I keep flinching toward when the day’s getting away from me. There’s one problem with it: my own capacity is the limit. Most agents can validate their own work, but enough still need me to look. The engineering world has already solved this, so let’s borrow its fix.

Last Tuesday, 11:03 AM. Three apps open across two screens — Claude, Cowork, 6 terminal tabs running Claude Code. By 11:14 I’d added two more — competitor research in Perplexity, and a browser extension to sort my inbox. Ten active surfaces — most of them demanding I read, edit, or judge their output. I was hitting accept the way you scroll a feed when you’ve stopped reading.

(A little context: I’m a PM and founder, not an engineer. I open Claude Code, Cursor, and a few terminal tools — the dev-adjacent comfort you pick up after a decade of shipping products with engineers. One tier above most non-coders, several tiers below any developer. This article is the playbook from that in-between seat.)

I’m not the outlier. Even at OpenAI, engineers cap out around three to five. Alex Kotliarskyi, posting from inside the company on the day they open-sourced Symphony:

At the same time, knowledge workers’ attention on a single screen has collapsed to ~47 seconds, down from 2.5 minutes in 2003 — Gloria Mark’s UC Irvine research (her 2023 book Attention Span gathers the data). Stack AI assistance on top of normal app-toggling, and the supervision tax compounds.

It’s time to fix at least the AI supervision problem. Engineers call the fix orchestration.

§2 — Devs solved this for devs

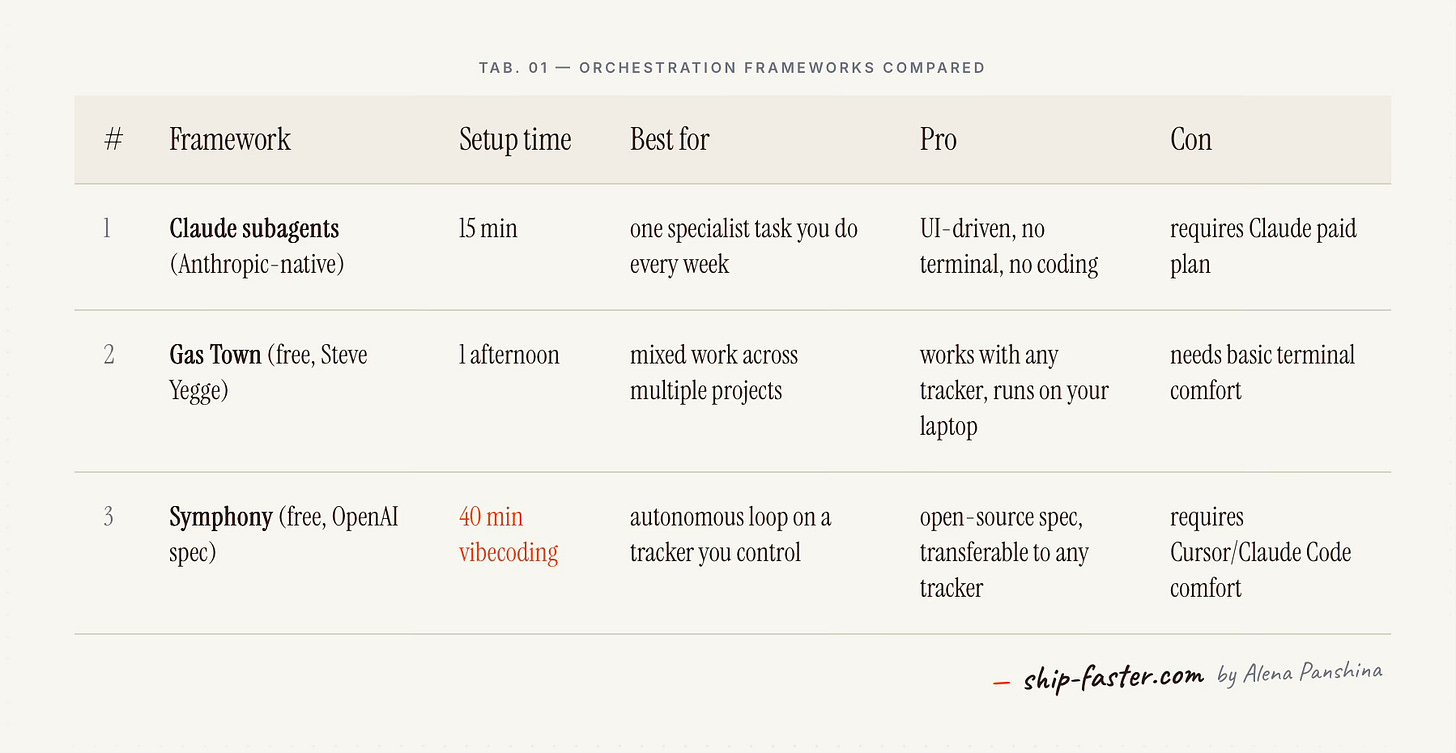

Three labs shipped a working orchestration layer this spring. Different stacks and different vocabularies, but these are the playbooks I crib in §5.

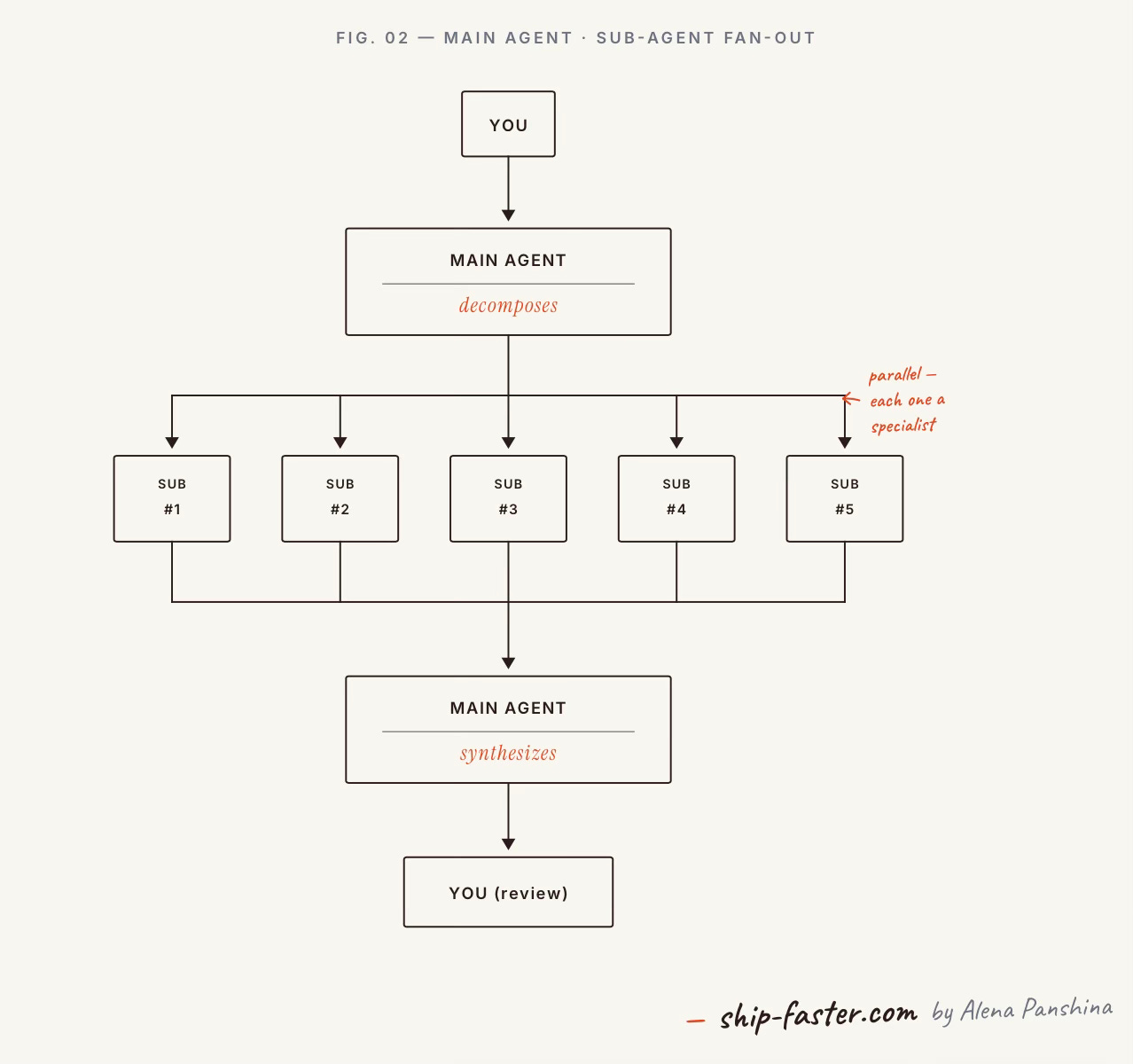

Anthropic — Claude Code with parallel subagents. Cat Wu, Head of Product for Claude Code and Cowork at Anthropic, described on Lenny's podcast (April 23) how her team builds: a main agent writes a to-do list, then spins up sub-agents in parallel against it.

Steve Yegge — Gas Town. Yegge — long-time engineer, ex-Google, ex-Amazon, ex-Sourcegraph, now building Gas Town full-time — released Gas Town as a free, open-source supervisor that manages your tasks. One supervisor (“the Mayor”), a queue of typed tasks, one workspace folder per task, a review gate before done.

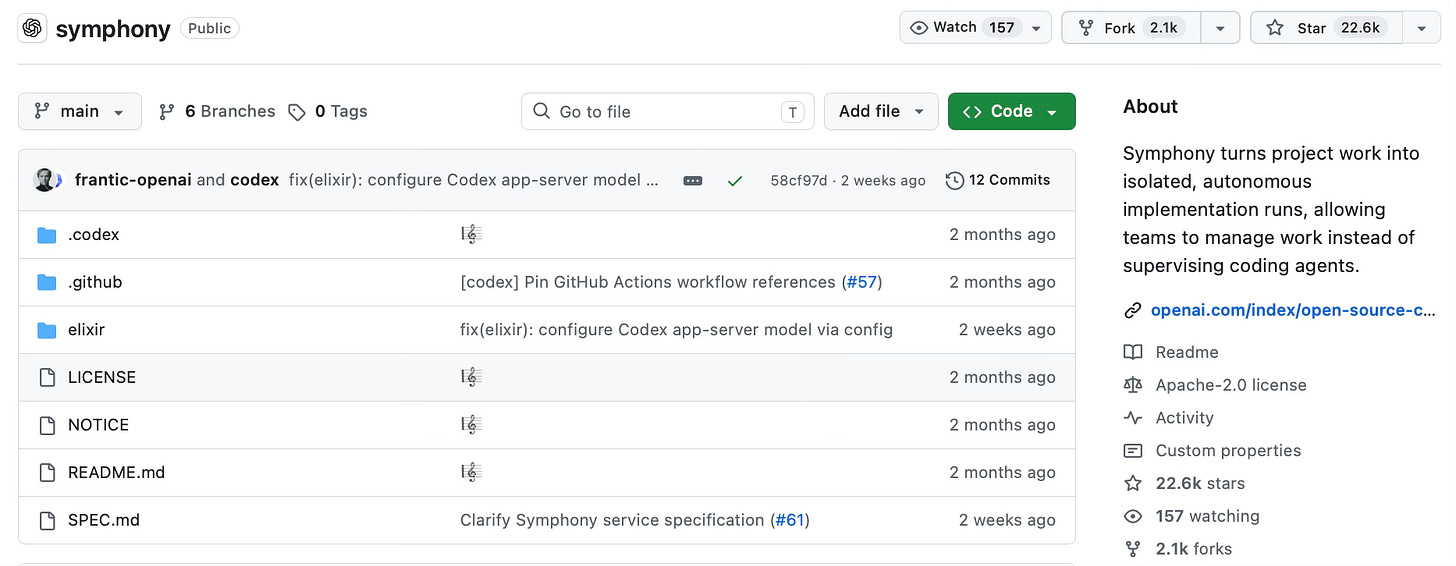

OpenAI — Symphony. Symphony shipped April 27 — the OpenAI announcement, Help Net Security, InfoWorld. Pitch in one line, from the README: “Symphony turns project work into isolated, autonomous implementation runs, allowing teams to manage work instead of supervising coding agents.” 22.2k stars ten days in. Sanchit Vir Gogia named the gap in InfoWorld: “Generation scales effortlessly, validation does not.”

§3 — What orchestration is

Orchestration is the layer that decides which agent does which task, when, and in what order. Not a tool — the layer above the tools. Devs use the conductor metaphor for a reason: a conductor doesn’t play any instrument. The conductor decides which player comes in when, listens for what’s off, calls a cue.

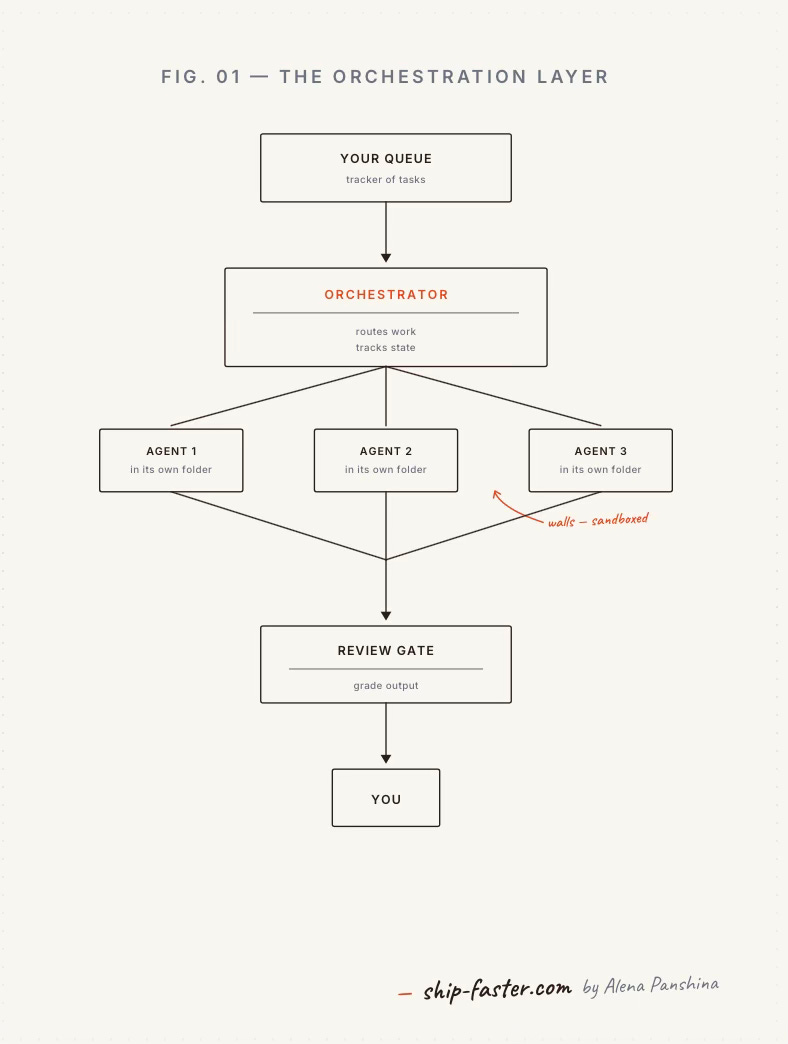

An orchestrator does five things you’d otherwise do in your head:

Holds the queue. One list of work, with type tags. Everything goes here first.

Routes work. Picks which agent (or which kind of agent) handles each task — and how many run at the same time.

Walls each task off. Every task gets its own folder. The agent can’t read or write outside it. (Devs call this sandboxing. You can ignore the word; the idea is that the walls are real and the agent can’t see your desktop.)

Runs the review gate. Every output gets graded — by you or by another agent — before it flows on.

Tracks state. Knows what’s running, what’s pending, what’s done. Retries failures automatically.

When you have one AI tool open, you don’t need this layer — you are the layer. You hold the queue in your head, decide which prompt goes to which tab, read every output, judge if it’s good. Fine.

When you have four tools open and ten agents running, the layer in your head breaks down. The fix isn’t more discipline. It’s giving the layer somewhere to live outside your head.

Monday.com put it cleanly: “Think of it like a conductor leading an orchestra: each musician has a specialty… but the conductor ensures they play in harmony, come in at the right moment, and produce something cohesive.”

You’ve already done this without naming it. I sent a research brief to a contractor on Monday: do user research on agent-creation workflow pain — five interviews with PMs running AI agents daily, return as a clean summary by the end of the week. Brief = a typed task on a queue. Her working folder = her own private folder. Her draft = artifact. My Friday review = gate. You have been running orchestration on humans for years. The new piece is that one of the contractors is now an AI helper and another is Claude Code.

Inside that five-piece system, every individual task runs the same four-beat loop: queue → its own folder → agent works alone → you grade at the gate. The whole thing rests on one editorial unit: the done-when line. If you can’t write the done-when line in a sentence, the task isn’t ready to be automated. The criterion is the contract.
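The four-beat loop and the done-when rule above can be sketched in a few lines of Python. This is a minimal illustration, not code from any framework in this article: the `Task` record, the stage names, and the "one sentence" heuristic are all assumptions made for the sketch.

```python
from dataclasses import dataclass

# Stage names are illustrative; they mirror the four beats described above.
STAGES = ["queued", "in_folder", "agent_working", "at_gate", "done"]


@dataclass
class Task:
    title: str
    brief: str
    done_when: str = ""   # the one-sentence acceptance criterion
    stage: str = "queued"


def ready_to_automate(task: Task) -> bool:
    """A task may leave the queue only if its done-when line is written.

    Assumption for this sketch: "one sentence" is approximated as
    non-empty text with no sentence break in the middle.
    """
    text = task.done_when.strip()
    return bool(text) and text.rstrip(".").count(". ") == 0


def advance(task: Task) -> Task:
    """Move a task one beat forward; refuse to start without a criterion."""
    if task.stage == "queued" and not ready_to_automate(task):
        raise ValueError(f"{task.title!r} has no usable done-when line")
    position = STAGES.index(task.stage)
    if position < len(STAGES) - 1:
        task.stage = STAGES[position + 1]
    return task
```

The point of the guard is that the refusal happens at the queue, before any agent time is spent, which is where the article argues the criterion belongs.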

§4 — Where it works for non-devs

Three operators worth meeting: a Claude Code PM at Anthropic shipping with parallel subagents; a solo non-coder running a 15-agent stack alone; an in-house PM team running a six-agent portfolio at a real-world SaaS. Same shape, three tiers.

Aaron Sneed — The Council (solo)

Aaron Sneed runs a defense-tech company alone with 15 custom GPTs he calls “The Council” — chief of staff, legal, finance, HR, comms, security/compliance, engineering, supply chain, field ops, and others. Stack: ChatGPT Business plus custom GPTs. Workflow: he drops a request-for-proposal into the chat and every agent weighs in at once. A chief-of-staff agent sets priority — legal, compliance, and security get the loudest vote. The legal agent does upfront prep, then real legal work goes to his human lawyer.

On the training discipline, from the February 2026 Business Insider profile: “I don’t want a bunch of yes agents. I trained them purposefully to give me pushback because I’ve learned that they naturally want to agree with me.”

Numbers as he reports them: around 20 hours saved per week, “and that’s a very conservative estimate.”

Nick Ruggiero — Project44 Autopilot (in-house team)

Director of PM at Project44, a supply-chain SaaS; runs the Autopilot agent portfolio with a small co-PM team. Stack: six named agents — Freight Procurement, Disruption Management, Network Operations, Exceptions Management, Slot Booking, Carrier Onboarding — sitting on a decade of platform context. Workflow shape, per Project44’s April 8 launch announcement: an orchestration layer “manages the sequence: what runs first, what feeds into what, when to escalate, and how to synthesize outputs into a coherent result.”

Numbers as Project44 reports them at the April 2026 Decision44 customer event: Freight Procurement Agent — 4.1% cost reduction, 75% faster sourcing, 70% less manual coordination. Cargo Theft Agent — intervention time from 40 minutes to 12. Slot Booking Agent — 17% detention reduction, 10 hours of weekly labor saved per user.

§5 — The three frameworks, simplest to hardest

Before any framework: the cheapest move on the list is telling Claude when to use subagents on its own.

Step 0 — drop a subagent primer in your CLAUDE.md

If you use Claude Code at all, this is two minutes that pays back daily. Open ~/.claude/CLAUDE.md (the ~ is shorthand for your home folder; create the file if it doesn’t exist) and paste the block below. Claude reads it on every session, and from then on it’ll spin up subagents on its own when work is separable, and stop when work needs one voice.

# Subagents — when to use them

You can spin up subagents for side work that would otherwise flood my main context (long file reads, log digs, big searches, repeat tasks). Run a subagent in its own context, return only a summary.

## When to spin one up

- A side task would dump 500+ lines I don't need to read myself.

- I keep asking for the same kind of work (cleaning meeting notes, summarizing email threads, pulling stats from a CSV). Suggest saving it as a named subagent: `email-summarizer`, `meeting-notes-cleaner`, `csv-skimmer`.

- The ask has 3+ separable pieces (different files, different sources, no shared state). Run them as parallel subagents, then merge.

## When NOT to spin one up

- The output must be one coherent thing — one document, one slide deck, one post. Use a single writer; coordinating voice across subagents is worse than doing it yourself.

- The task is small enough that the round-trip costs more than the context it saves.

- I've only asked for it once. Wait for the second time before suggesting a saved subagent.

## Defaults

- Read-only by default. A subagent gets Read, Grep, Glob, and WebFetch (read files, search files, list files, fetch a web page) unless I say otherwise.

- Never give a subagent Bash, Edit, or Write without asking me first.

- Always summarize back. Don't dump raw subagent output into the chat — give me the answer plus the one or two file paths or quotes that back it up.

- If a subagent fails or returns nothing useful, tell me. Don't paper over it.

That’s the philosophy on a page. The three frameworks below are how you wire it to a queue, a tracker, and a review gate.

Framework 1 — Claude subagents — start with one specialist, then orchestrate

Gate: this is the Claude Code product, not Claude.ai. Paid tier only (Pro / Max / Team / Enterprise) — the free Claude.ai chat does not include subagents. If you only have Claude.ai, skip to Framework 2 or 3.

Two stages, same UI. Stage one = one specialist you set up once and reuse weekly. Stage two = a main agent that dispatches several specialists in parallel and merges results — the supervisor pattern Cat Wu described. Ship stage one first.

What you’ll need: Claude Pro/Max/Team/Enterprise, Mac or Windows, 15 minutes, one repeatable 20-min task you’d normally do by hand.

Stage one — set up your first specialist

1. Create the agent. Type /agents → Library → Create new agent → Generate with Claude. Describe your specialist in plain English:

“An email summarizer. Reads emails I paste in, returns sender + ask + urgency. No fluff.”

Claude writes the name, description, and full instructions. You write zero code.

2. Set permissions. In the tool checklist, leave Read-only tools checked and uncheck everything else. Save.

3. Run it. Drop feedback_week.txt into the folder and type:

“Use the summarizer on @feedback_week.txt — sender, ask, urgency.”

Five short blocks come back in chat in under a minute.

The agent is permanent. Same prompt next Friday returns the same flow over new emails.

Stage two — orchestrate in parallel. Once you have two or three specialists saved, fire them at the same job. In Claude Code chat, type the multi-part task in plain English and tell it to use the subagents. Example:

Decompose this into parts and dispatch the right subagents in parallel: take all customer emails in ~/Documents/feedback_april/, send each one through email-summarizer; in parallel run meeting-notes-cleaner over ~/Documents/calls/; run sent-folder-skim over ~/Sent/april.mbox for what I committed to. Then merge into one weekly digest with three sections.

Claude builds a to-do list, dispatches each saved subagent in parallel, and merges the answers. The activity panel shows one row per subagent so you can watch them run. Final answer comes back as one digest in chat. The cap on you stays at 3-5; the cap on what you can ship rises because the main agent does the watching.
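For readers who think better in code, the fan-out-then-merge shape looks roughly like this. It is a sketch of the pattern only, not how Claude Code implements subagents: the three specialist functions are stand-ins that return canned strings, and `run_digest` is a hypothetical name.

```python
from concurrent.futures import ThreadPoolExecutor


# Stand-in specialists. In real use each would be a saved subagent;
# here they are plain functions so the sketch runs on its own.
def email_summarizer(source: str) -> str:
    return f"emails summarized from {source}"


def meeting_notes_cleaner(source: str) -> str:
    return f"call notes cleaned from {source}"


def sent_folder_skim(source: str) -> str:
    return f"commitments found in {source}"


def run_digest(jobs: dict) -> str:
    """Fan out one job per specialist, then merge in a fixed section order.

    `jobs` maps a section title to a (specialist, source) pair.
    """
    with ThreadPoolExecutor(max_workers=max(1, len(jobs))) as pool:
        futures = {
            title: pool.submit(specialist, source)
            for title, (specialist, source) in jobs.items()
        }
        # Collect by title, so the digest order does not depend on
        # which specialist happened to finish first.
        results = {title: future.result() for title, future in futures.items()}
    return "\n".join(f"## {title}\n{body}" for title, body in results.items())
```

Merging by title rather than by completion order is the design choice worth copying: the parallel part can finish in any order, but the digest always reads the same way.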

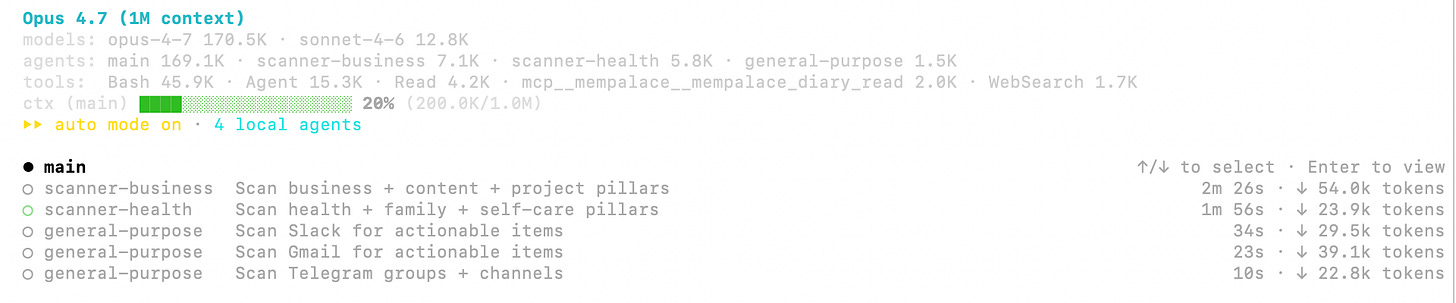

My own use: my broader inbox scan. Looks like this:

Want me to go deeper on Framework 1 — a real subagent walkthrough, screenshots, the prompts I save? Drop a comment and I’ll write the follow-up.

Framework 2 — Gas Town — hire a Mayor for your agent town

Skim path. This is the longest section in the article. If “open Terminal” is a hard no for you, jump to Framework 3 (no install, vibecode against your existing tracker) or §6 (the failure modes, useful regardless of framework). If you’ve ever opened Claude Code and want a daily town, the rest of F2 is yours.

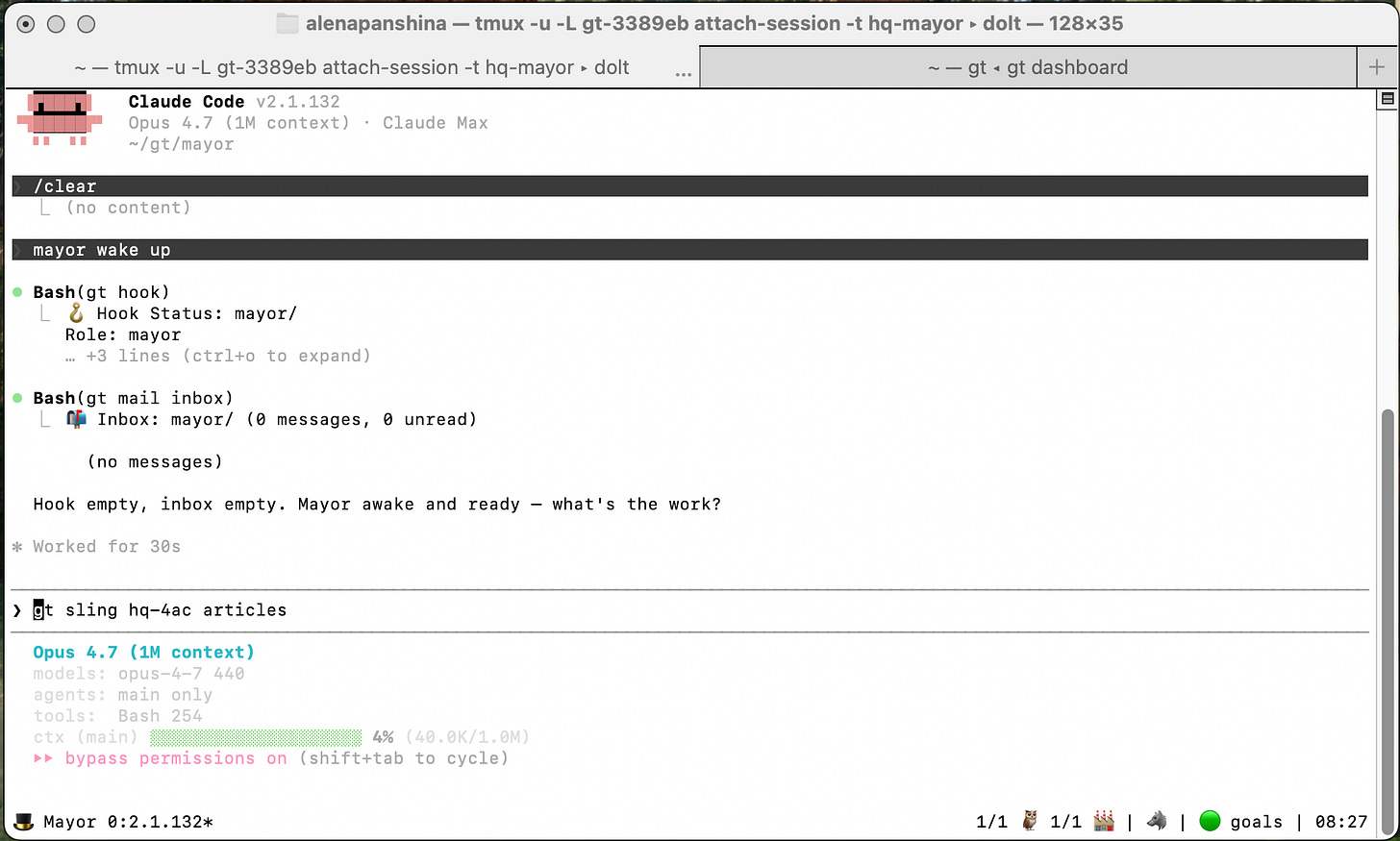

Gas Town is an open-source orchestrator from Steve Yegge. The shape: you tell the Mayor what needs to happen; the Mayor hands jobs to subagents, watches them, pings you when something’s ready for review.

Install (one time):

brew install gastown (install Homebrew first if missing)

gt install ~/gt --git — creates your town folder

cd ~/gt && gt mayor attach — Mayor wakes up

Don’t edit config files the first week. Defaults are sensible.

Note: the current Gas Town README quick-start example uses ~/git as the directory; confirm that ~/gt works the same on a fresh install.

How it works inside

No magic — just folders, files, and background terminal windows talking to each other.

The town is a folder. ~/gt/ on your Mac. A mayor folder, an inbox folder, a rigs.json file that lists every project the town knows about. Open it in Finder right now. Nothing is hidden in the cloud.

Tasks are little ticket files. When you brief the Mayor, it writes a task (GT calls it a bead) — a small structured record with a title, brief, status (ready, in-progress, done), and owner. The whole queue is just a sortable list of these. That’s why “what’s ready?” is instant: it’s a folder, not a server call.

The Mayor is one Claude in a sticky terminal. gt mayor start opens a background terminal window named hq-mayor and boots a Claude session inside. That window stays open between your visits. You attach, talk, detach. The conversation persists.

Subagents are separate Claudes in their own folders. When the Mayor hands a task to a subagent, GT clones the project into a fresh subfolder under ~/gt/ (GT calls each project’s folder a rig), opens a new background terminal, and starts a brand-new Claude inside. Different conversation, different memory, different files it can touch. It cannot reach outside that folder — Mac file permissions enforce that. No “and then it edited my desktop” stories.

Two flavors of subagent. A fresh subagent (GT calls it a polecat) is spun up per task and thrown away when done. A named subagent (the crew) is one you keep for recurring jobs.

It’s free because there’s no server — open-source code, your laptop, your files. The only thing you pay for is the Claude Code subscription that does the actual writing.
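The "tasks are little ticket files" idea above is easy to picture in code. The sketch below is not Gas Town's actual storage format, which this article does not describe; it assumes one JSON file per ticket, and `write_ticket` and `tickets_with_status` are hypothetical helper names.

```python
import json
from pathlib import Path


def write_ticket(queue_dir: Path, ticket_id: str, title: str, brief: str,
                 status: str = "ready", owner: str = "") -> Path:
    """Save one ticket as a small JSON file named after its id."""
    queue_dir.mkdir(parents=True, exist_ok=True)
    path = queue_dir / f"{ticket_id}.json"
    record = {"id": ticket_id, "title": title, "brief": brief,
              "status": status, "owner": owner}
    path.write_text(json.dumps(record, indent=2), encoding="utf-8")
    return path


def tickets_with_status(queue_dir: Path, status: str) -> list:
    """Answer "what's ready?" by reading the folder, no server involved."""
    found = []
    for path in sorted(queue_dir.glob("*.json")):
        record = json.loads(path.read_text(encoding="utf-8"))
        if record.get("status") == status:
            found.append(record)
    return found
```

Because the queue is just files, anything that can list a folder can answer "what's ready?", and the answer survives a closed terminal.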

Reading the screen

🪝 Hook Status: mayor/ — The hook is the one piece of work pinned to the Mayor right now. Empty now → empty in the line. When work is hooked, the task title appears here. The hook survives if you close and re-attach the terminal — it’s the durability anchor, not a one-shot prompt.

“Hook empty, inbox empty” — Nothing pinned, no unread messages from subagents. When you brief a task, you’ll see it get created and confirmed in this area. When a subagent sends a message, an unread count shows up on the inbox line.

🎩 Mayor — Bottom-left status. The hat = Mayor role. The agents and rigs running below this — subagents, watchers — show up as their own emojis on this same line as they spin up.

0/1 🦉 — Witness — a per-rig health watcher that auto-spawns when the rig wakes up. The fraction is active / instances: 0/1 means it exists but is idle; 1/1 while it’s actively checking on a subagent. You don’t summon it; you’ll just see it appear once a project starts working.

1/1 🏭 — Refinery — the town’s behind-the-scenes finisher. Cleans up and merges the artifacts your subagents produced once you’ve approved them. 1/1 = awake and ready. Same active / instances shape as the witness. Auto-managed; Mayor talks to it, you don’t.

🐺 Deacon — Deacon — town-level watchdog. Watches the Mayor and every rig at once; pings the Mayor when something looks off (a subagent stuck, a rig that should be alive but isn’t). You almost never interact with the Deacon directly — it’s the safety net.

🟡 goals — A rig is one project’s private folder under ~/gt/, with its own subagents. “goals” is the project name; the dot tells you, at a glance, whether that project’s subagents are alive. Green = subagents are humming. Yellow = something’s running but not fully staffed (one of two agents is up). Dark/grey = nothing happening in this project right now. Park (🅿️) = paused on purpose; docked (🛑) = stopped on purpose. Read it the way you read a stove burner — color tells you whether to walk over.

Sending your first task.

Attach with cd ~/gt && gt mayor attach. Mayor’s first move on every attach is to read your queue — that’s why it greets you with what’s pinned, what’s finished, and what’s stuck before asking what you want.

Type at the › cursor in plain English. There’s no “set task” command — describe what you want, and Mayor turns it into a task on its own. One or two sentences, ending with a save path if you want a written artifact:

Read the five customer interview transcripts in ~/Documents/interviews/. Pull the three most common complaints about onboarding, with one verbatim quote each. Save as interview_themes.md in this folder.

What happens next, step by step:

Mayor echoes the brief back and files it as a task — an id like gt-abc shows up in chat as confirmation.

Mayor dispatches a fresh subagent into the project’s folder. The folder’s status emoji on the bottom line turns green.

The subagent reads only what’s in that folder and writes its artifact there. It can’t see your desktop or any other folder.

Brief a second task while the first is running — it queues or runs in parallel depending on your subagent cap.

Walk away. The subagent keeps running in the background as long as the laptop stays awake. The task queue and the mail to the Mayor are both durable. Re-attach hours later and it’s all there.

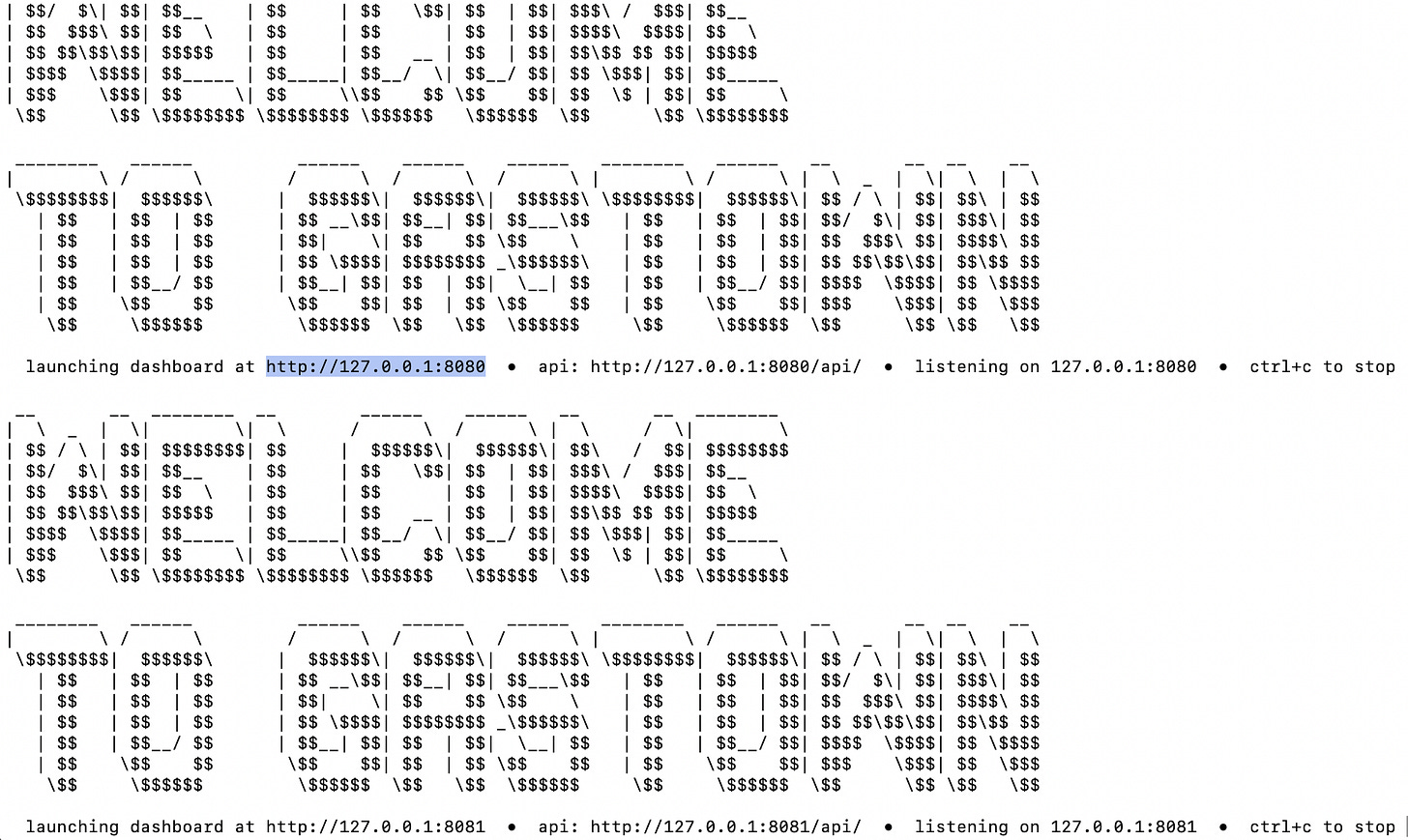

For a live view, open a second Terminal and run gt feed (text log) or gt dashboard (web view at localhost:8080).

When a subagent finishes, the artifact lands as a file in its project folder. Open it in TextEdit, Notion, whatever. To accept, do nothing. To reject, tell the Mayor: “The interview themes missed the pricing complaint in transcripts 2 and 4. Redo.” If the subagent is unfixable, say “this is unusable, try a totally different approach” — Mayor throws the subagent away and starts a new one with no memory of the failed attempt.

What you’d actually run through GT.

Use GT for work that needs walled-off parallel work, durability, or a gate between stages. Single-prompt work belongs in Claude.ai — GT is overhead for one-offs.

Quarterly competitor diligence sweep. Board prep on Monday. You need a comp matrix on five competitors — pricing pages, product changelogs, recent press, LinkedIn job posts, GitHub commits. Five briefs at once: one subagent per competitor, each in its own folder with its own slice of the prompt. They can’t see each other, can’t borrow each other’s framing, can’t converge into one mid-quality summary. A sixth subagent merges into the matrix. Drop the same job into a single Claude.ai window and the five blur — same writer, same context, one mid voice across all five. Walls force diversity.

Overnight investor pipeline triage. Friday end-of-day: 80 inbound investor cold emails sitting in a folder. Three sequential briefs: parse → score against your ICP and stage thesis → draft a personalized reply for the top decile. Brief at midnight; a plugin chains stage-to-stage when each one completes. Saturday morning, you open the project folder: 8 ready-to-send drafts, the rest tagged with a one-line reason. A single Claude.ai chat can’t run while you’re at dinner, and it can’t pass scored output from one stage into a draft-writing stage with a different prompt and a different reviewer.

How to operate the Mayor.

Brief like you mean it. The difference between a useful artifact and a wasted hour is three lines. A bad brief: “draft the launch FAQ.” A good brief: “Draft launch FAQ at work/launch/faq.md. Use the 12 transcripts in work/launch/transcripts/. Done when: 8 questions, each ≤80 words, citing transcript number. Working folder: work/launch/.” Artifact path, done-when, working folder. Without those three, the subagent invents its own and you can’t tell if it’s right.

Fire briefs in parallel, not in line. When you have four briefs that don’t touch each other, send them all at once: gt sling gt-101 gastown, gt sling gt-102 gastown, etc. Each spawns a fresh subagent in its own folder. They run concurrently, not queued. The cap is set by your scheduler.max_polecats config, your account quota, and the system’s window limits. gt agents list shows who’s alive.

Redo, same subagent. When the artifact is close but wrong, ping the subagent: gt nudge greenplace/Toast "Redo. The themes missed the pricing complaint in transcripts 2 and 4." Same subagent, same context — it keeps what it learned and fixes the gap. Cheap, fast. Use 80% of the time.

Fresh subagent when the frame is wrong. If the subagent is fundamentally pointed wrong, don’t argue with it — throw it away. gt handoff <task-id> re-pins the work to a brand-new subagent with no prior memory. Use this when ping #2 still drifts.

Read two dashboards, not one. gt feed is the in-terminal log — subagents, work-in-flight, scrolling event stream. Glance every hour. gt dashboard is the web view at localhost:8080 — same picture in calmer pixels. Open it when you want an overview without a wall of events.

Walk away. Once a task is dispatched, you don’t need to babysit it. Leave the terminal window open and your laptop awake — go to lunch, switch tabs, take a call. Subagents keep running in the background and finish on their own. Re-attach with gt mayor attach when you want to check in.

(Closing the lid puts macOS to sleep and pauses the whole town. For overnight runs, turn on Settings → Battery → Prevent automatic sleep on power adapter when display is off.)
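The three-line rule from "Brief like you mean it" (artifact path, done-when, working folder) can be checked before a brief is ever sent. The sketch below is a rough lint, not part of Gas Town: `missing_brief_parts` is a hypothetical helper, and the patterns are heuristics that assume briefs are written the way the good example above is.

```python
import re


def missing_brief_parts(brief: str) -> list:
    """Return which of the three required parts a brief is missing."""
    checks = {
        # Heuristic: a file path with an extension, e.g. work/launch/faq.md
        "artifact path": re.search(r"\S+/\S+\.\w+", brief),
        "done-when": re.search(r"done when\s*:\s*\S", brief, re.IGNORECASE),
        "working folder": re.search(r"working folder\s*:\s*\S", brief,
                                    re.IGNORECASE),
    }
    return [name for name, match in checks.items() if not match]
```

An empty list means the brief names all three parts. It says nothing about whether the criterion is any good, which stays a human call.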

For you if you have repeatable knowledge work — drafts, summaries, scoring, research scans. Not for you if “open Terminal” is a hard no, or you don’t use Claude Code.

Want me to go deeper on Gas Town — install walkthrough with screenshots, the briefs I actually use, day-five reality check? Drop a comment and I’ll write the follow-up.

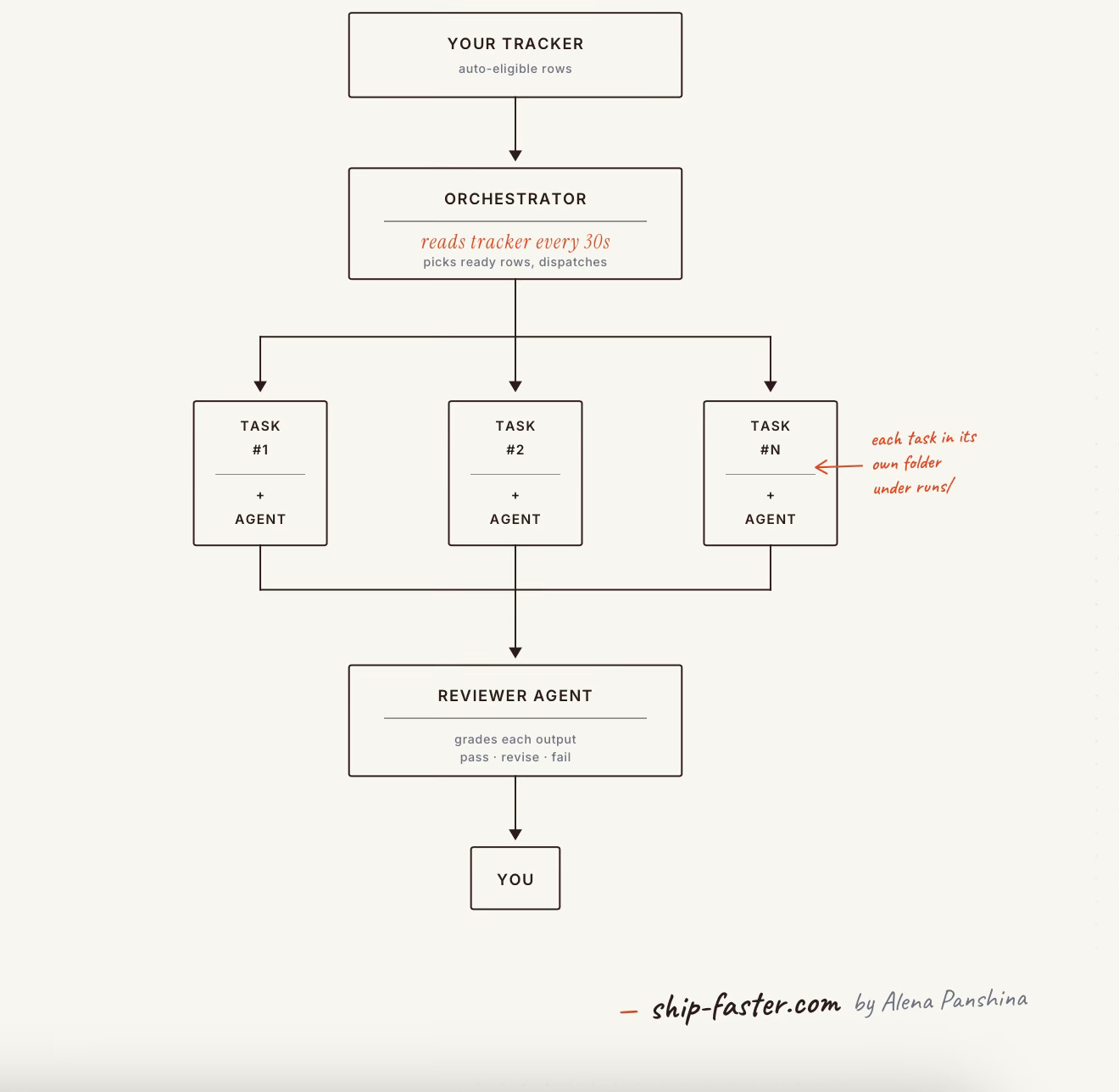

Framework 3 — Symphony — vibecode the watcher against your own tracker

Symphony vs Gas Town in one breath. Gas Town is a working supervisor you install on your laptop — a Mayor, subagents, walled-off folders, all wired and ready, but it owns the queue (the task list lives under ~/gt/). Symphony is a spec, not a binary — you vibecode the loop yourself against the tracker you already use (Notion, Airtable, Linear, sqlite). Pick GT if you want a town that runs today; pick Symphony if your work already lives in a tracker you don’t want to migrate from.

Symphony is OpenAI’s spec open-sourced on April 27, 2026 — a queue, a watcher, an isolated folder per task, a reviewer agent, a human at the end. Per the README: “Symphony turns project work into isolated, autonomous implementation runs, allowing teams to manage work instead of supervising coding agents.” The reference build is in Elixir (a programming language — you don’t have to use it; you’ll write your own version against your own tracker). 22.2k stars, 2.05k forks ten days in — but only a handful of contributions back yet. Admired, not adopted.

Here’s the move: the Symphony spec transfers as a concept, not as a thing you install. The concept is six pieces:

A queue. Rows in any tracker — Notion, Airtable, Linear, a Google Sheet, a single-file database called sqlite on your laptop — with three columns added: brief (the prompt), done-when (the criterion), auto-eligible (a true/false checkbox you tick when a row is ready for an agent to take).

A watcher. A small program — call it a watcher — that runs on your laptop and polls the tracker (checks it once every thirty seconds) for rows that are ready and ticked auto-eligible.

A folder per task. When the watcher picks up a row, it opens a fresh subfolder under runs/<task-id>/ and copies the brief in. The agent that runs in this folder can only read and write inside it.

An agent. The watcher dispatches Claude (or any chat model) inside the folder. It reads the brief, does the work, writes an artifact to disk.

A reviewer. A second agent — same model, different prompt — reads the artifact, compares it to the done-when line, and returns one of three verdicts: pass, revise, fail.

A pile of three outcomes. Pass → artifact goes to your output folder. Revise → row goes back to the queue with reviewer notes appended. Fail → row surfaces to you for a manual look.

You vibecode all six against the tracker you already use. Forty minutes with Cursor or Claude Code is enough. I vibecoded mine in ~150 lines of Python against goals.db (sqlite); two agents run at a time.
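If your tracker is a sqlite file, the queue piece might look like the sketch below. It is an assumed schema, not the author's goals.db layout and not anything from the Symphony spec: the table name `tasks` and the helper names are made up, and the column names use underscores because hyphens would need quoting in SQL.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS tasks (
    id            INTEGER PRIMARY KEY,
    title         TEXT NOT NULL,
    status        TEXT NOT NULL DEFAULT 'ready',
    brief         TEXT NOT NULL DEFAULT '',   -- the prompt
    done_when     TEXT NOT NULL DEFAULT '',   -- the criterion
    auto_eligible INTEGER NOT NULL DEFAULT 0  -- 1 = an agent may take it
);
"""


def open_queue(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the tracker and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.row_factory = sqlite3.Row
    conn.executescript(SCHEMA)
    return conn


def add_task(conn, title, brief, done_when, auto_eligible=False) -> int:
    cur = conn.execute(
        "INSERT INTO tasks (title, brief, done_when, auto_eligible) "
        "VALUES (?, ?, ?, ?)",
        (title, brief, done_when, int(auto_eligible)),
    )
    conn.commit()
    return cur.lastrowid


def eligible_tasks(conn, limit: int = 2) -> list:
    """Rows an agent may pick up: ready, ticked, and with a criterion."""
    return conn.execute(
        "SELECT * FROM tasks WHERE status = 'ready' AND auto_eligible = 1 "
        "AND done_when != '' ORDER BY id LIMIT ?",
        (limit,),
    ).fetchall()
```

The `limit` default of 2 mirrors the two-agent cap mentioned above. Filtering out rows with an empty `done_when` is an extra guard added for this sketch.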

Setup — six steps to vibecode your own.

Pick your tracker. Whatever already holds your work: Notion DB, Airtable, Linear, Google Sheet, sqlite file. The one you already update without thinking. Don’t migrate.

Add three columns to it. brief (the prompt), done-when (the criterion), auto-eligible (boolean). That’s the contract.

Open Cursor or Claude Code in a new empty folder. Paste this watcher brief verbatim. (The thirty-second poll, the two-agent cap, and the auto-eligible/done-when columns aren’t in the OpenAI spec — they’re my interface choices in my variant. Adjust if you want.)

Build a watcher that polls my task tracker every thirty seconds. When a row has auto-eligible = true and status = ready, the watcher opens a fresh folder under runs/<task-id>/, drops the brief in, dispatches Claude Sonnet inside, and saves the output as an artifact file. A reviewer reads the artifact, compares it to the row’s done-when line, and returns one of three verdicts: pass, revise, fail. Cap two agents running at once. Pass moves the artifact to my output folder. Revise sends the row back to the queue with reviewer notes. Fail flags it for me. Build it in whatever language fits the tracker — Python against sqlite, JS against Notion API, whatever’s already in your stack.

Tell it your stack. One line: “Build it in Python against my Notion tracker at [URL] using the Notion API” — or “in Python against ~/goals.db using sqlite3” — or “in JS against Airtable base [ID]”.

Test with one row. Write a real task into your tracker — title, brief, done-when, set auto-eligible = true. Run the watcher (Cursor will tell you python watcher.py or similar). Watch it open a folder under runs/<id>/, dispatch the agent, write the artifact, run it past the reviewer.

Leave it running. A Terminal tab with the watcher polling. Add rows from your phone, your laptop, wherever you already update the tracker. The watcher picks them up within thirty seconds.

How it runs.

You add a row to your tracker — title, brief, done-when, auto-eligible = true. Within thirty seconds the watcher (which is already running in a Terminal tab) sees it. The watcher creates runs/<task-id>/, copies the brief in, fires up a Claude Sonnet agent with that folder as its working directory. The agent reads the brief, does the work, writes its output to disk. The watcher hands that output to a second Claude — the reviewer — with one question: does this satisfy the done-when line? The reviewer returns pass, revise, or fail. Pass-artifacts move to your output folder; revise-rows go back to the queue with the reviewer’s notes appended; fail-rows surface for you to look at.

Until you check the tracker, you see nothing. When you check, you see only the pile of three: passed (look at if you want; otherwise ship), revised (queued again, no action), failed (your turn).
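One polling pass of the loop described above can be sketched as follows. This is not the author's 150-line watcher and not the OpenAI reference build. Rows are plain dicts rather than tracker records, and `run_agent` and `review` are placeholder callables standing in for the real model calls, so every name here is an assumption.

```python
import shutil
from pathlib import Path
from typing import Callable


def process_row(row: dict, runs_dir: Path, output_dir: Path,
                run_agent: Callable[[Path], Path],
                review: Callable[[str, str], tuple]) -> dict:
    """Run one eligible row through folder, agent, reviewer, and routing.

    `run_agent(workdir)` must write an artifact inside workdir and return
    its path. `review(artifact_text, done_when)` must return a
    (verdict, notes) pair with verdict in {"pass", "revise", "fail"}.
    """
    workdir = runs_dir / str(row["id"])
    workdir.mkdir(parents=True, exist_ok=True)
    (workdir / "brief.md").write_text(row["brief"], encoding="utf-8")

    artifact = run_agent(workdir)
    verdict, notes = review(artifact.read_text(encoding="utf-8"),
                            row["done_when"])

    if verdict == "pass":
        output_dir.mkdir(parents=True, exist_ok=True)
        destination = output_dir / f"{row['id']}-{artifact.name}"
        shutil.move(str(artifact), str(destination))
        row["status"] = "done"
    elif verdict == "revise":
        # Back to the queue, with the reviewer's notes appended to the brief.
        row["brief"] += f"\n\nReviewer notes: {notes}"
        row["status"] = "ready"
    else:
        # Anything else, including an unexpected verdict, goes to a human.
        row["status"] = "needs-human"
    return row


def poll_once(rows: list, runs_dir: Path, output_dir: Path,
              run_agent, review, cap: int = 2) -> list:
    """One polling pass: take at most `cap` ready, auto-eligible rows."""
    picked = [r for r in rows
              if r["status"] == "ready" and r["auto_eligible"]][:cap]
    return [process_row(r, runs_dir, output_dir, run_agent, review)
            for r in picked]
```

A real watcher would call `poll_once` in a loop with a thirty-second sleep, run the picked rows concurrently rather than one after another, and limit how many times a row can bounce back as revise. Those parts are left out to keep the routing logic visible.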

The “done when” line is your editorial unit. Your developer can build the loop; only you can write the criterion that decides what good looks like. Four from my own queue:

Customer-feedback synthesis. Done when: 5+ source emails clustered into ≤3 themes, each theme has 2 verbatim quotes, top theme tagged ship-this-week with a named owner.

Weekly investor update. Done when: ≤300 words, three numbers (this week vs last), one named risk, one specific ask with a date.

Inbox triage. Done when: every item tagged in one of {ship-today, this-week, next-month, drop} with a one-line reason; nothing left untagged.

LinkedIn post draft. Done when: ≤220 words, one specific receipt (date, name, or number) in the first three lines, one provocation in the last line, no list of three, no “in 2026” opener.

Same rule from §3 applies here, sharper: if you can’t write the done-when line in a sentence, the task isn’t ready to be automated. The criterion is the contract — without it, Symphony is a way to fail at scale.
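Some done-when lines are concrete enough to check without a model at all. As an illustration, here is the inbox-triage criterion from the list above turned into a small gate. The item shape (a dict with `subject`, `tag`, and `reason`) and the function name are assumptions for the sketch.

```python
ALLOWED_TAGS = {"ship-today", "this-week", "next-month", "drop"}


def triage_failures(items: list) -> list:
    """Return a list of human-readable problems; empty means the gate passes.

    Each item is assumed to be a dict with "subject", "tag", and "reason".
    """
    problems = []
    for item in items:
        subject = item.get("subject", "(no subject)")
        tag = item.get("tag")
        reason = (item.get("reason") or "").strip()
        if tag not in ALLOWED_TAGS:
            problems.append(f"{subject}: missing or unknown tag {tag!r}")
        if not reason:
            problems.append(f"{subject}: no reason given")
        elif "\n" in reason:
            problems.append(f"{subject}: reason is longer than one line")
    return problems
```

If a criterion can be written this way, the reviewer can be a function instead of a second agent. If it can't, that is a hint the line still needs a human or a model at the gate.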

For you if you already run multiple long-running agent jobs against a tracker you control and want them dispatched while you sleep. Not for you if “open Cursor and write a 150-line watcher” is a hard no — pick Lindy, or stop at Framework 2.

Want me to go deeper on Symphony — my 150-line Python watcher annotated, the done-when patterns that work, the ones that don’t? Drop a comment and I’ll write the follow-up.

§6 — Three things to avoid even with an orchestrator

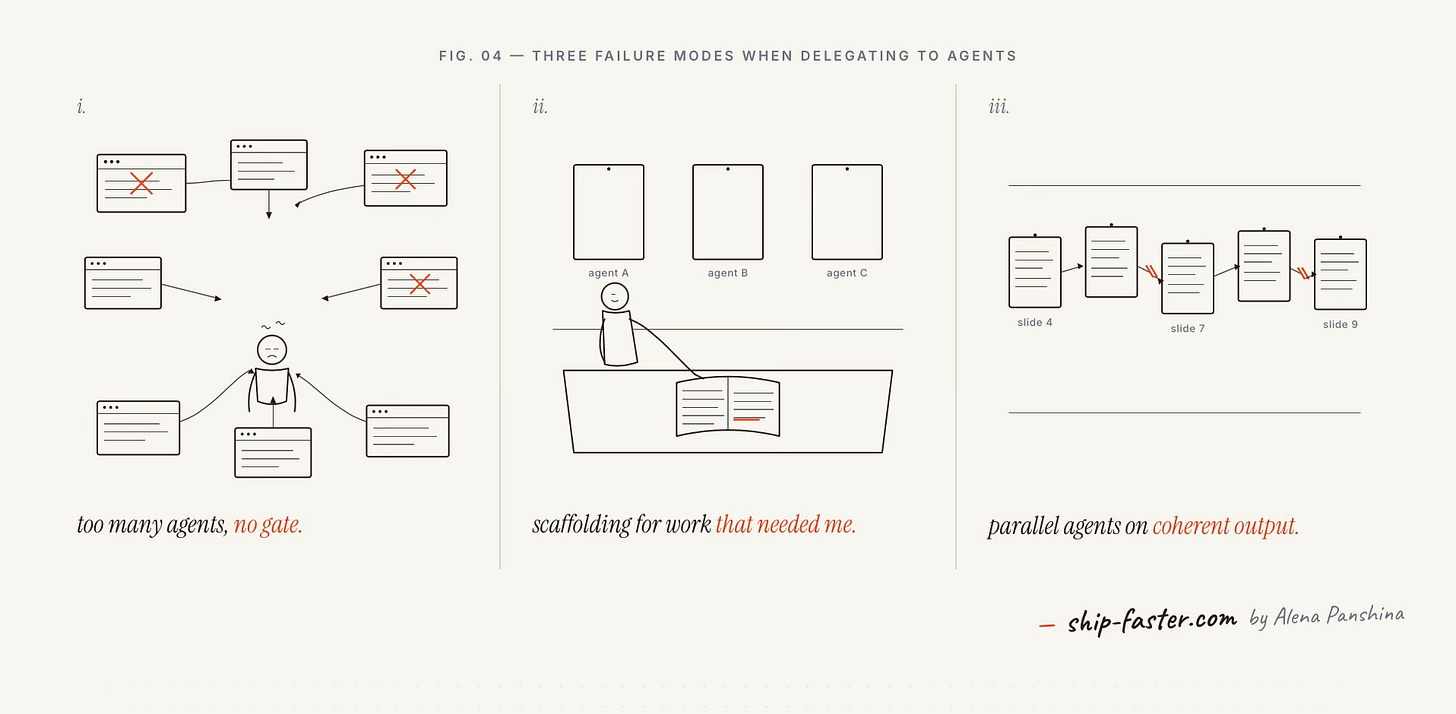

The orchestrator doesn’t fix everything. Three failure modes I still hit, all from one project — the DeclAI investor deck I was building six days ago.

Avoid 1 — Too many agents at once, no supervision capacity

Receipt: §1’s 11am scene. Eight agents open. The competitor research one slipped, I clicked accept, bad output flowed downstream into three things at once.

Recovery, in my world: “Breath in, breath out. Reduced number of active tasks (turn off TikTok), and started from the beginning.”

Avoid by: a review gate on every agent before its output flows to another agent. One sentence: “Does this match the actual ICP?” One yes/no. The gate is cheap. The downstream rework is not. (Simon Willison has argued the engineering version of this for two years: once one agent’s output is consumed by another, the trust boundary is the gate, not the human at the end.)

Avoid 2 — Building scaffolding for work that needed me

Receipt: same project, slide-order phase. I created three different agents to draft the deck structure. None of them produced anything I shipped. My own line, verbatim: “Oh, with pitch deck — I created 3 different agents, but needed just do it myself, except small pieces.”

A friend in a private founders’ channel put the pattern cleanly: “So many people spending hours on Claude Code SDK for automation which exceeds the amount of time it would have taken for them to do it manually.” Productivity theater. The deck wanted me — not three agents.

Avoid by: sixty seconds with a notebook before dispatching any agent, spent writing the done-when line. If you can’t write that test, you do the work.

Avoid 3 — Parallel agents on coherent output

Receipt: same project, Day 3. I tried parallel pitch deck creation — all slides written by parallel agents — and ended up with twelve broken slides. Slide 4 calls back to slide 2. Slide 7 sets up slide 9. Parallel agents wrote each slide to its own local optimum.

What would have caught it: the agent-tag rule from Gas Town — research-tagged tasks fan out, writing-tagged tasks run solo, because written output needs coherence. The framework encodes this so I don’t have to remember it under fatigue.

Avoid by: a single writer agent with the full deck outline, sequentially. Twelve slides in twelve passes, each pass aware of every prior slide. The wall-clock penalty for sequential is 4x. The output penalty for parallel is rewriting all twelve slides. The math is not close.
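The single-writer fix is a plain loop: each pass receives everything written so far. A minimal sketch, assuming a placeholder `write_slide` callable in place of a real model call (both names are hypothetical):

```python
from typing import Callable


def write_deck(outline: list, write_slide: Callable[[str, list], str]) -> list:
    """Draft slides one at a time, handing each pass every earlier slide.

    `write_slide(title, prior_slides)` is a placeholder for a model call.
    """
    slides = []
    for title in outline:
        # Pass a copy so the writer cannot alter earlier slides.
        slides.append(write_slide(title, list(slides)))
    return slides
```

The parallel version would call `write_slide(title, [])` for every slide at once, which is exactly why slide 4 could not call back to slide 2.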

§7 — Close

Orchestration isn’t the framework. It’s the habit.

Every time you pick up a task this week, run a one-question check: is this separable? If three contractors could divide it without stepping on each other, three agents can. That’s the work for the queue. If it has to come out of one head — one slide deck, one keynote, one apology email — keep it. The framework can’t fix coherence.

The discipline is small and constant. Notice when you’re doing the supervisor’s job by hand. Notice when you’ve opened the fifth tab. Notice when you’re hitting accept without reading. That’s the moment to file the task, not push through it.

The philosophy is one line: stop being the person who does the separable work. Become the person who briefs it, gates it, and ships what passes.

Pick the framework that suits you or create your own. Wire it to the tracker you already use. Write the done-when line for one task. Run it. Review at the gate. Run another. Then notice the next task you’d otherwise have done by hand.

Tell me in the comments: what’s the first separable task you’re filing this week, and which tool already holds your queue?

P.S. — If you want the prequel, I wrote about why the harness layer mattered more than the model in I rebuilt the AI agent 10 times. Open and honest about what didn’t work.

Thank you to my founding members — Kostas Nasis, Artem Krivonos, and Kristina Hananeina. Your early support made this newsletter real.
